Camper Solar and Battery Upgrade

(May 2024)

My 2010 Four Wheel Camper Hawk came with an old AGM battery that caught fire, so I had to replace it. After some research, and after determining my average and peak loads, I settled on an Epoch 300Ah LiFePO4 battery. I also added 400W of solar to the roof, along with a new Victron solar charge controller, and replaced the alternator relay with a proper DC-DC charger. Now I can run my camper for an entire trip without worrying about anything losing power. Next on the list: gas struts to offset the new weight on the roof, LED lighting, and a more manageable table. My wife also says she needs her coffee maker, so I need to add a 2000W inverter.

Custom Sit/Stand Desk

(August 2017)

Designed and constructed a sit/stand desk. Researched various designs to create my own custom design in SketchUp. The desk includes four LED buttons, a screen (not yet implemented), and a joystick. The buttons are used to navigate the menu system that will be displayed on the screen. The joystick is used as a headphone hanger. The desk has a manual and automatic mode for adjusting the height of the desk. Manual lets the user directly change the height while automatic mode will alternate between two given heights at a given interval. There are also preset modes for saving, loading, and deleting desk heights.
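A minimal sketch of how the automatic mode's alternation between two heights could be computed. This is not the desk's actual firmware; the function name, parameters, and units (centimeters, seconds) are assumptions for illustration.

```python
def auto_target_height(elapsed_s, height_a, height_b, interval_s):
    """Return the height the desk should be at after elapsed_s seconds.

    Hypothetical helper: the desk starts at height_a and switches to the
    other height every interval_s seconds.
    """
    if interval_s <= 0:
        raise ValueError("interval_s must be positive")
    # Count how many full intervals have passed since automatic mode started.
    interval_index = int(elapsed_s // interval_s)
    # Even intervals use the first height, odd intervals the second.
    return height_a if interval_index % 2 == 0 else height_b


if __name__ == "__main__":
    # Example: sit at 72 cm, stand at 110 cm, switch every 30 minutes.
    for minutes in (0, 29, 30, 61):
        print(minutes, auto_target_height(minutes * 60, 72, 110, 30 * 60))
```

Deriving the target from elapsed time, rather than toggling a flag on a timer, keeps the function stateless and easy to check.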

WalkVR

Cal Hacks 2.0 (October 2015)

Created a full-body VR experience using the Oculus Rift DK2, Leap Motion, and Myo Armband. The Leap Motion tracked hand gestures, while the Myo Armband was worn around the ankle to track walking.

WalkVR Devpost

WalkVR GitHub

ObjectRekt

Flir Hackathon (June 2015)

ObjectRekt uses the FLIR Lepton, a longwave infrared thermal imager, along with OpenCV object recognition to create an automated camera that observes the scene and tracks a presenter's location, panning to follow them. The Lepton sensor and the Raspberry Pi's camera were attached to a 3D-printed servo mount.
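A rough sketch of the panning step: turning the presenter's horizontal position in the frame into a new servo angle. The function name, the field-of-view value, the servo range, and the sign convention are all assumptions here, not details from the original project.

```python
def next_pan_angle(current_deg, target_x, frame_width,
                   fov_deg=50.0, min_deg=0.0, max_deg=180.0):
    """Return a servo angle that re-centers the tracked target.

    Hypothetical helper. target_x is the target's horizontal pixel
    position; fov_deg is an assumed horizontal field of view.
    """
    # Offset from the frame center as a fraction of the width (-0.5..0.5).
    offset = (target_x - frame_width / 2) / frame_width
    # Convert that fraction of the field of view into degrees of rotation.
    # The sign depends on how the servo is mounted (assumed here).
    new_deg = current_deg + offset * fov_deg
    # Keep the command inside the servo's mechanical range.
    return max(min_deg, min(max_deg, new_deg))


if __name__ == "__main__":
    # Target at the right edge of an 80-pixel-wide frame.
    print(next_pan_angle(90.0, 80, 80))
```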

ObjectRekt Devpost

ObjectRekt GitHub

SlugTrails

HackUCSC (January 2015)

SlugTrails is an app for Android and iPhone that aims to track wildlife sightings. When the user spots a wild animal, they tag it in the app, which then enters the sighting into a database. After tagging a sighting, the user can view the tagged data in a map or list view.

SlugTrails Devpost

SlugTrails GitHub

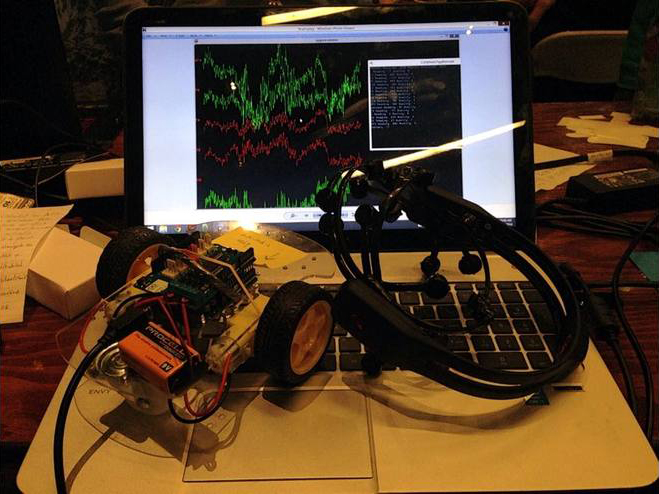

EmoCar

Cal Hacks (October 2014)

Mind-controlled Arduino Uno rover using an Emotiv EPOC neuroheadset. By reading raw EEG data from the headset, we were able to isolate the part of the brain responsible for spatial thinking (the parietal lobe). We used machine learning to train a threshold on that brain activity, which let us send a "GO" command to the rover.
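A minimal sketch of what training a threshold could look like, assuming each EEG window has already been reduced to a single activity value. This is a simple accuracy-maximizing search, not necessarily the method used in the project; the function names and sample values are hypothetical.

```python
def train_threshold(rest_samples, go_samples):
    """Pick the threshold that best separates rest from "GO" samples.

    Hypothetical helper: a sample counts as "GO" when its value is at or
    above the threshold. Every observed value is tried as a candidate.
    """
    best_threshold = None
    best_correct = -1
    for candidate in sorted(set(rest_samples) | set(go_samples)):
        correct = sum(x < candidate for x in rest_samples)
        correct += sum(x >= candidate for x in go_samples)
        if correct > best_correct:
            best_threshold, best_correct = candidate, correct
    return best_threshold


def is_go(activity, threshold):
    """Return True when the activity value should trigger the rover."""
    return activity >= threshold


if __name__ == "__main__":
    rest = [1.0, 1.2, 0.9, 1.1]   # made-up activity values while relaxed
    go = [2.0, 2.3, 1.9, 2.1]     # made-up values while concentrating
    threshold = train_threshold(rest, go)
    print(threshold, is_go(2.2, threshold), is_go(1.0, threshold))
```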

EmoCar Devpost

EmoCar GitHub

Hartbeat

Alpha Game Jam (September 2014)

A heart-rate-based FPS game made using UnrealScript and UDK. Using an optical heart rate sensor and an Arduino, we gathered heart rate data, which was then used to vary the player's spread radius in-game.
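A small sketch of one way a heart rate could map to a spread radius: a clamped linear interpolation. It is written in Python for illustration rather than UnrealScript. The direction (higher heart rate means wider spread) and all default values are assumptions.

```python
def spread_radius(bpm, rest_bpm=60.0, max_bpm=180.0,
                  min_spread=1.0, max_spread=5.0):
    """Map a heart rate in beats per minute to a weapon spread radius.

    Hypothetical mapping: spread grows linearly from min_spread at
    rest_bpm to max_spread at max_bpm and is clamped outside that range.
    """
    # Normalize the heart rate to 0..1 across the expected range.
    t = (bpm - rest_bpm) / (max_bpm - rest_bpm)
    t = max(0.0, min(1.0, t))
    return min_spread + t * (max_spread - min_spread)


if __name__ == "__main__":
    for bpm in (50, 60, 120, 180, 200):
        print(bpm, spread_radius(bpm))
```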

Hartbeat Devpost

Hartbeat GitHub

findAR

Hero Hacks (August 2014)

An Oculus Rift augmented reality application that lets the user view their world through various filters such as grayscale, sepia, black/white, outline, and hue gradient. It also has color picker and facial recognition modes. The color picker mode filters out all colors other than the selected color. The facial recognition mode uses machine learning and a small database of photos to match faces to the photos in the database and display the matching name.
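A minimal sketch of the color picker idea: pixels close in hue to the selected color are kept, and everything else is turned gray. This uses only the Python standard library and is not the application's actual code. The function name, the tolerance values, and the choice to gray out rather than black out other colors are assumptions.

```python
import colorsys


def color_pick(pixels, target_rgb, hue_tolerance=0.05, min_saturation=0.2):
    """Keep pixels near the target hue; convert the rest to gray.

    Hypothetical helper. pixels is a list of (r, g, b) tuples, 0..255.
    """
    target_hue = colorsys.rgb_to_hsv(*(c / 255 for c in target_rgb))[0]
    result = []
    for r, g, b in pixels:
        hue, sat, _ = colorsys.rgb_to_hsv(r / 255, g / 255, b / 255)
        # Hue is circular, so measure distance the short way around.
        diff = abs(hue - target_hue)
        diff = min(diff, 1.0 - diff)
        if sat >= min_saturation and diff <= hue_tolerance:
            result.append((r, g, b))
        else:
            # Standard luma weights for an RGB-to-gray conversion.
            gray = round(0.299 * r + 0.587 * g + 0.114 * b)
            result.append((gray, gray, gray))
    return result


if __name__ == "__main__":
    frame = [(255, 0, 0), (0, 255, 0), (0, 0, 255)]
    print(color_pick(frame, target_rgb=(255, 0, 0)))
```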